Weekends can be filled with activities that benefit both physical and mental health.

Some sweeteners offer more health benefits than sugar when added to coffee.

Called "Earn Your Wingz", the challenge requires participants to consume 15 chicken wings coated in a sauce containing Carolina Reaper pepper.

Now, the food has evolved to become increasingly bizarre.

A cat in China has caught the public's attention after lighting the stove in its owner's kitchen on fire.

Before it turns into depression, there are several symptoms that need to be recognized.

Comedian Nish Kumar has shared that he wants to play James Bond in an "elaborate farce".

These hilarious cat jokes and quotes will surely make you laugh out loud and remember the funny antics of our beloved feline friends.

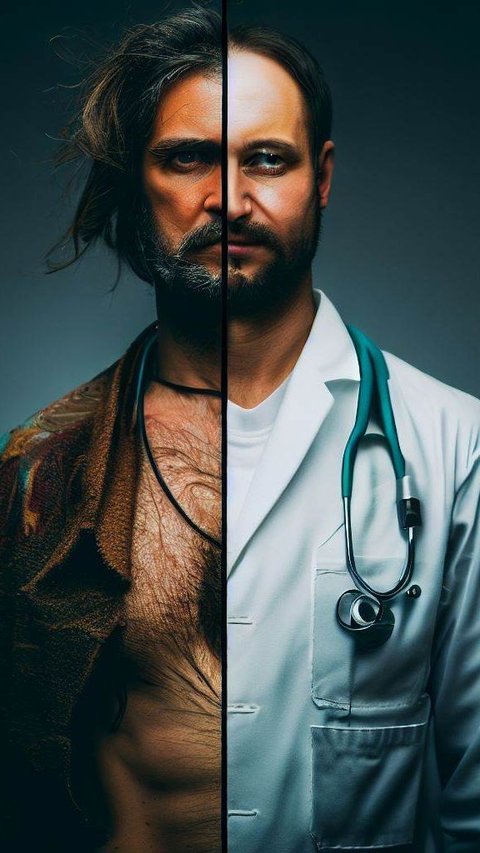

A number of terrifying treatment methods from the past are still applied in modern medicine.

Linkin Park is rumored to be considering a reunion tour in 2025.

The unique car was inspired by the Drag-U-La coffin car featured in a 1965 episode of The Munsters television series.

Did you know that chewing gum has several health benefits? From protecting teeth to relieving stress, here are some surprising benefits of chewing gum.

Proper preparation before running can prevent injuries and maximize performance.

A number of foods and drinks should apparently not be consumed together with tea or coffee.

These great and iconic high school movies will take you back to your high school life.

Before drinking coffee in the morning, there are several things that can be done to prevent problems.

Children should get used to drinking water from a young age because of its health benefits.

There are several unique facts you should know about Paris Saint-Germain, also known as PSG.

Cyprus is a paradise island located in the Mediterranean Sea and a famous summer holiday destination. Here are the top 6 places to visit in Cyprus this summer.

Arguments and drama usually occur when trying to calm a child who is having a tantrum.

Though she is known for her role on the TV show "Friends," she has also starred in several comedy and drama movies.

It is important to apply sunscreen to the entire body, including covered areas.

When life gets tough, it can feel like walking through a dark tunnel with no end.

An itchy nose can be caused by allergies, irritation, respiratory tract infections, polyps, or nasal tumors.

It is important to engage in sports that can be beneficial for tightening the female organs.
