Amid the struggle, some quotes resonate with the pain of addiction. These addiction quotes can serve as a guiding light.

Read More

You can find many things at the Mercado Republica de San Luis Potosi market in Mexico.

Read More

Madison Beer became famous in 2012 when Justin Bieber shared one of her YouTube covers.

Read More

Let them guide you to a deeper understanding of trust in your relationships.

Read More

Finding the right horoscope match for a Libra can lead to a fulfilling and loving relationship.

Read More

These movies offer poignant portrayals of loneliness in its various forms.

Read More

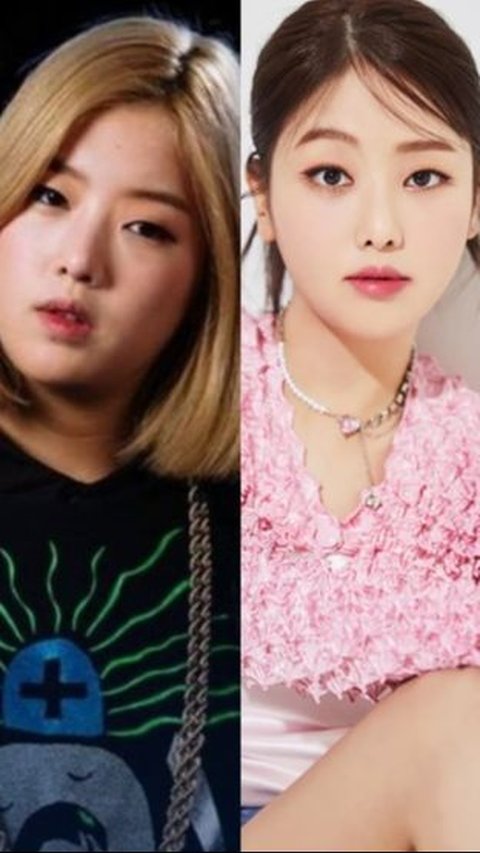

Returning with her latest single, Kisum has amazed many people with her new look.

Read More

Let's explore the best cities in the USA to live in and find your perfect place to call home!

Read More

The rapper shows interest in buying one of the most popular platforms via a thread on X.

Read More

These quotes will help you navigate life's journey.

Read More

In fact, this announcement can be seen in users' flipside accounts.

Read More

These Christian birthday wishes are more than words—they are heartfelt prayers for God's blessings upon your friend on their special day.

Read More

Apple will include an original calculator app in the upcoming iPad product.

Read More

A woman in South Korea lost more than $50,000 to a scam.

Read More

Let’s explore this world of stupid quotes and laugh. Maybe they hold some hidden wisdom to teach us.

Read More

The couple is now encouraging others to do the same.

Read More

Feminists fight for a world with equal rights, opportunities, and respect.

Read More

These attractive female pool players from around the globe prove that beauty and skill can coexist.

Read More

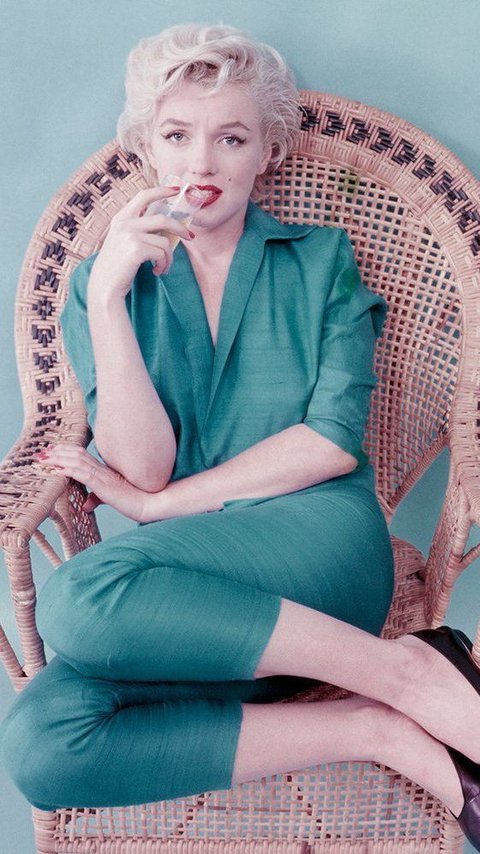

She loves to look glamorous and is famous for her beauty.

Read More

People close to Zendaya and Tom Holland say that marriage is a topic of conversation for them.

Read More

Thursdays offer a perfect opportunity to pause and reflect on the blessings surrounding us.

Read More

Doctors believe that she may have borderline personality disorder.

Read More

FinanceBuzz announced that it is celebrating the upcoming Star Wars Day.

Read More

An extremely rare blind vole has been spotted and photographed in the Australian outback.

Read More

Are you a dog lover or just looking for some good laughs? Here are some paw-some dog jokes that will brighten your day.

Read More